Cograph

A code-analysis tool that uses agentic AI to write its own documentation.

Open source

github / cograph

Cograph started with a problem I kept having and then stopped paying attention to. I'd come back to a codebase six months later and have no idea why I'd built a particular thing. The comments were stale, the README never matched the code, and reading commit history felt like archaeology. I wanted a tool that turned a codebase into a map I could walk, with an LLM doing the boring narration so I didn't have to.

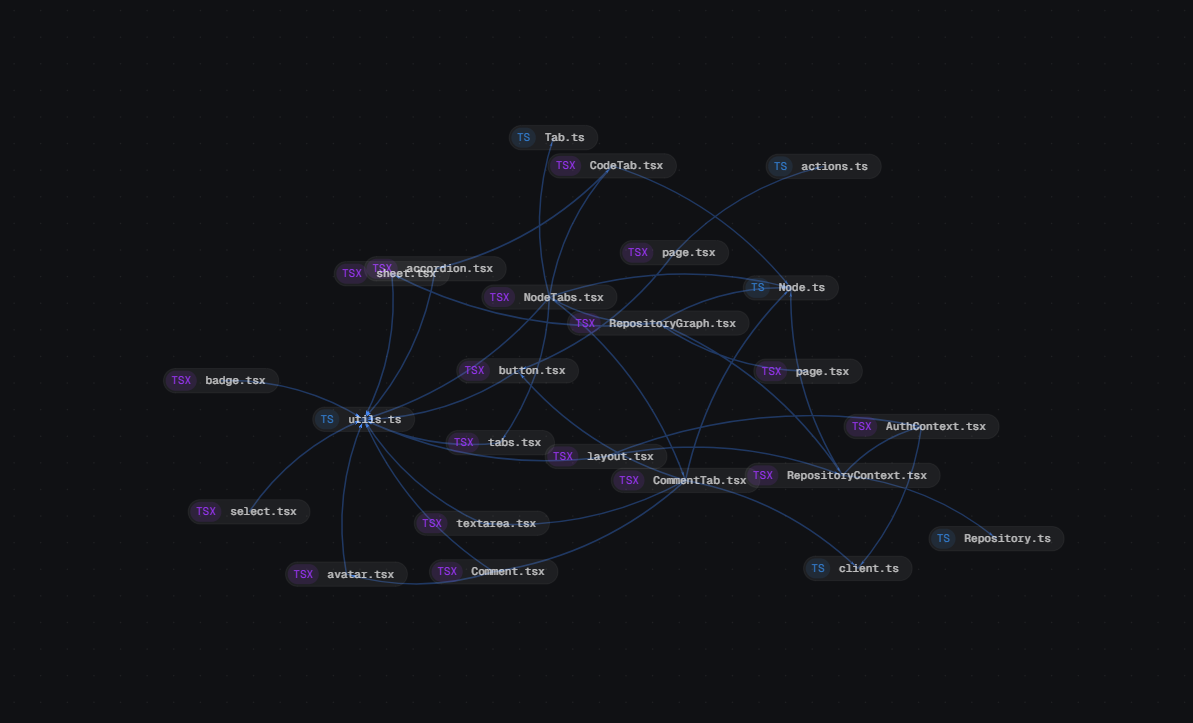

The thing it became: paste a GitHub repo URL, the system clones it, Claude walks every file and pulls out imports, exports and named entities, and the result lands in your browser as an interactive force-directed graph. Click a node to read the file. Click an annotation to add your own. The graph is the index page.

How it's built

Three apps in a monorepo. A NestJS API doing auth and graph queries, a Next.js front end with the canvas and a Monaco-powered file inspector, and a standalone MCP server that exposes the analysis pipeline as a small set of tools.

Splitting the analyser out into its own MCP server was the architectural decision that earned its keep, even if it took a rewrite to land there. The first version had the Claude calls baked into the API as a private implementation detail. After the refactor the analyser became its own deployable, and the API became one of its clients. The same pipeline now runs whether it's driven by an HTTP request from the web app or by a Claude Desktop session someone is running locally. The LLM that built the analyser can drive it. That demos differently than curl against an endpoint.

Two databases on purpose. Postgres for the relational data: repositories, files, AI summaries, annotations. Neo4j for the dependency graph itself. Force-graph rendering wants graph-shaped reads, and Cypher is the right tool for traversal queries; doing the same thing in Postgres ends in recursive CTEs nobody enjoys debugging. Benchmarking the traversal queries showed roughly a 12x performance difference between Cypher in Neo4j and recursive CTEs in Postgres. Enough to justify the architectural cost of the second database. Redis sits in front for queueing, Supabase handles auth.

The thing I deleted in April

Cograph spent its first five months as the wrong product. The original mental model was SaaS-shaped: an Organisation contains Projects, a Project contains Repositories, members have roles per Project. That's the right shape for a team product, and it was the shape I'd built before, so it was the shape my hands reached for. It wasn't right for this one.

Every analysis ran against a single repository. The Project layer was adding two clicks to reach the graph, joins on every query, an entire sidebar component, role-based access guards protecting a layer no user cared about, and a few thousand lines of code I had to maintain. So one Saturday I deleted all of it, and repositories became top-level objects belonging directly to a profile.

The pivot recognised that the product is the graph and the noun is "repository." Everything else was scaffolding I'd built because "obviously a team product needs orgs and projects." There were no teams. "Paste a repo URL, get a graph" turned out to be a much better pitch than "create an org, invite teammates, create a project, add a repo, then get a graph."

Working with an unreliable subprocess

The hard part of the pipeline wasn't the MCP plumbing or the graph rendering. It was getting reliable structured output out of the LLM. Asking Claude to "extract the imports from this file" returns prose by default, and prose isn't parseable. The first runs came back as valid-looking JSON about four times out of five and freeform commentary the rest, which is exactly the failure mode that wastes an afternoon.

The fix had three parts. A literal JSON schema in the prompt with enum-style type values. A retry wrapper with backoff, capped tightly enough that one flaky file can't stall a whole repo's analysis. And treating the response as untrusted input on the way back: parse, validate, fall back gracefully. The same care I'd give a third-party REST API.

The lesson, learned halfway through and not at the start: an LLM call is an API. Treat it like one.

Other things that fought back

Neo4j wants string IDs because the graph is shaped by file paths. Postgres wants UUIDs because rows move and paths rename. Reconciling the two is a small thing that surfaces in every feature touching both stores, and there's no clean answer. It's a tax I pay on every query that crosses them.

Storage was the other place I had to course-correct. Early on I'd mirrored every file's content into Postgres so the inspector had something to render. The database grew fast, the data went stale the moment anyone pushed to GitHub, and GitHub already serves raw file content for free. The inspector now fetches from raw.githubusercontent.com on demand, and Postgres only keeps what's worth keeping: metadata, summaries, annotations.

Then there's the long-running-job problem. Analyses take minutes for a real repo, and the first version of the UI just froze and offered no feedback. WebSockets would have been nicer in theory; in practice an AnalysisJob row with status and progress columns plus a polling hook every couple of seconds is unkillable, debuggable with curl, and good enough.

Ingestion at scale was the related problem. The first version walked the repo serially, which had the UI sitting on a spinner for minutes on a real-sized codebase. Now Octokit pulls files in parallel and the analyser streams partial graphs to the client as they come back rather than waiting for the whole repo to finish. A 250-file codebase lands in under a minute, and the graph fills in as the parser works through it.

Where it is now

Cograph started life as my final-year dissertation project at Staffordshire and kept going past the brief. It's open source and a little bit unfinished, in the way tools-for-yourself usually are. The next thing on the list is a "diff" mode that shows how the graph changes between two commits, which feels like the missing piece for using it on someone else's codebase. The thing after that is figuring out where this lives, if it lives anywhere at all.

What didn't work

errata- #1

Organisations and Projects as wrapper layers. I built the Org → Project → Repository hierarchy because it's the shape every team product has, and the shape my hands reached for. Five months in, every analysis still ran against a single repository, and the only thing the abstraction was protecting was itself. The pivot landed on a Saturday: organisations and projects gone, repositories promoted to top-level objects.

- #2

TypeDoc for API documentation. It went in early and quietly stopped earning its keep. The generated docs were a flat soup of files; nothing in them surfaced that the API was a NestJS app made of modules, providers, GraphQL types, and a dependency graph of its own. Compodoc understands all of that out of the box. Lesson: pick documentation tooling that understands your framework, not the most generic option.

- #3

Mirroring all source files into Postgres. The first version copied every file's content into the database so the inspector had something to render. The DB grew fast, the data went stale the moment anyone pushed, and GitHub already serves raw file content. The inspector now fetches from raw.githubusercontent.com on demand, and Postgres only stores what's worth storing: metadata, summaries, annotations.

- #4

Letting the LLM write summaries from raw source. First pass let Claude loose on entire files, with predictably mixed results: confident and frequently wrong. The agent loop now sees the structured output from the analysis step first and is constrained to comment only on entities the analysis already proved exist. The hallucinations dropped to a tolerable rate; the rest is human review through the annotations editor.